Used by permission of the Publishers from ‘Phill Niblock’, in The Ashgate Research Companion to Experimental Music edited by James Saunders (Farnham: Ashgate, 2009), pp. 313–329. Copyright © 2009

Phill Niblock has been developing his layered drone pieces for nearly forty years, working with multi-tracked sampled recordings of solo instruments that combine to produce a vibrant beating of fractionally detuned difference and sum tones. Heard live, the physical impact of his work is powerful: the chaotic richness found within the wall of sound he presents takes time to emerge, but once the listener is attuned to it, it reveals an interweaving of dense oscillating counterpoint. The scale of his pieces is important in this regard too: most average around 20 minutes, a duration which is essential for this attuning process. I first heard Niblock perform live in Ostrava in 2001. He was midway through his annual European concert tour and spent a morning playing five pieces accompanied by his films of people working. Although I had heard some of his music on CD previously, this had not prepared me for its live performance. As with my early encounters with the work of many of the people interviewed here, it was an experience which changed how I thought about music.

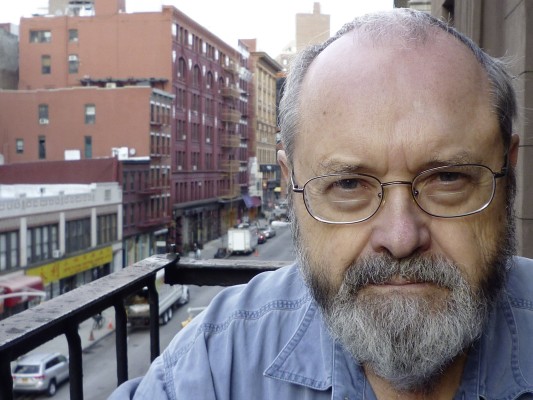

The interview was conducted by telephone on 11 May 2007.

I thought to begin perhaps we could talk a bit about the overall sound of your music, and I wondered what is it about drones and sustained textures which interest you as a composer in particular?

It’s about sound and recording I think. I began to really listen to music in 1948, and to listen to recordings and to collect records in the early 50s. It was exactly the time when LPs came out in 1950 and it was possible to have home hi-fi systems and everything seemed to work together. Tape also, the first tape recorders from the late 40s – so that sound began to be almost immediately much more like the sound you hear when you go to concerts, I mean much more realistic and less canned sounding, and so that was all a big influence on sound. I built my first hi-fi speaker system in 1953, which is still functioning in New York (it sounds great!). My first tape recorder: the first fairly cheap amateur tape recorders came out around ’53, and so I actually began to work with tape and recording at that time. So the whole business of sound and the reproduction of sound, because my music is very much about reproducing sound in the space which sounds and…well I mean there isn’t really a live sound of my music. It is reproduced sound basically because it comes from recording. That’s the whole idea for me – being able to record sound and to play it back in a way which makes it sound bigger and fuller than it would if it were played live.

What was it about recorded sound and use of that technology which really led to you working with drones? Was it something to do with the layering of sound perhaps?

I guess the initial idea was to work with this thing of using tones that are close together in pitch to produce other harmonic variations and that only works very well with drones I think. So I’m not sure which came first, the drones or the idea. But it all comes from sound of course and reproducing sound.
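The beating that Niblock describes, tones close together in pitch producing further variations, can be illustrated with a minimal sketch. It assumes idealized pure sine tones; real instrument tones carry many overtones, each of which beats against its neighbours, so actual patterns are far richer. The function names and the 220 Hz and 221.5 Hz values are hypothetical examples, not taken from any Niblock piece. By the identity sin a + sin b = 2 cos((a − b)/2) sin((a + b)/2), two tones at f1 and f2 Hz combine into a tone whose loudness swells and fades |f1 − f2| times per second.

```python
import math

def beat_rate(f1: float, f2: float) -> float:
    """Beats per second heard when two pure tones at f1 and f2 Hz sound together."""
    return abs(f1 - f2)

def mixed(t: float, f1: float, f2: float) -> float:
    """Sum of two unit-amplitude sine tones at time t (in seconds)."""
    return math.sin(2 * math.pi * f1 * t) + math.sin(2 * math.pi * f2 * t)

def envelope(t: float, f1: float, f2: float) -> float:
    """Slowly varying loudness envelope of the mixed signal, from
    sin a + sin b = 2 cos((a - b) / 2) sin((a + b) / 2)."""
    return abs(2 * math.cos(math.pi * (f1 - f2) * t))

# Two hypothetical tones a fraction of a semitone apart
f1, f2 = 220.0, 221.5
print(beat_rate(f1, f2))  # 1.5 beats per second
```

The closer the two pitches, the slower the beating; detuning them further speeds it up until it is heard as roughness rather than distinct pulses.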

One of the things I want to talk about is how you make a piece, and perhaps as a starting point for that could you explain how you go about choosing instruments or how that happens in terms of how you work?

Well the thing about using different instruments is that each instrument has a different timbre and so that creates a whole different set of overtone patterns and that’s probably the most major difference between one piece and another piece since they’re all drones and all using microtones. There are some internal changes of structure in the course of the piece, but a big part of it is the fact of these instruments having different timbres, and sometimes even the nuances of recording change that immensely. There’s a piece for acoustic guitars played with e-bows which we recorded with a single microphone very close to the guitar and the sound that resulted was certainly extremely pure, and had almost a sine-wave kind of quality, and so there were an incredible number of beat patterns which occur just because of the purity of the tone. There’s another recording of a piano and it’s recorded very close up but there’s sort of no fundamental there – no sort of sine-wavy fundamental – so that the tone that’s produced is so sharp-edged and varied that even when you make microtones close to each other it doesn’t really produce any difference tones. I mean it sounds great but it doesn’t work well in terms of being a piece of mine with microtones. Those are just two examples.

Do you tend to aim for lower range sounds in general due to the need for a strong fundamental?

Well I think that depends on the instrument because for instance in the piano piece we were using an Imperial Bösendorfer so we had lots of low sounds and it makes this really marvelously full clangorous sound. I mean I also work with flutes and stuff which don’t have low sounds. I made a piece recently for soprano saxophone and I actually pitched the sound one and two octaves down so there’s a real bass tone, but all of the sound comes from the soprano; it’s all a manufactured two-octave-down sound (made in Pro Tools with pitch shift) which makes the piece sound strange, but there are these bass notes and then floating over that there are much higher pitched soprano saxophone sounds.
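The arithmetic behind shifting a recorded tone down one or two octaves, or by a microtone, can be shown with a short sketch. It assumes 12-tone equal temperament, where a shift of n semitones multiplies frequency by 2^(n/12); an octave down therefore halves the frequency and two octaves down quarters it. It does not reproduce Pro Tools' pitch-shift processing, which also has to handle duration and timbre; the function names and the 440 Hz starting note are hypothetical illustrations.

```python
def shift_ratio(semitones: float) -> float:
    """Frequency ratio for a pitch shift of `semitones` in 12-tone equal temperament.
    Fractional values give microtonal shifts (0.1 semitone = 10 cents)."""
    return 2 ** (semitones / 12)

def shifted(freq_hz: float, semitones: float) -> float:
    """Frequency in Hz after shifting freq_hz by the given number of semitones."""
    return freq_hz * shift_ratio(semitones)

note = 440.0  # hypothetical soprano saxophone tone (A4), chosen only for illustration
for octaves in (1, 2):
    print(octaves, "octave(s) down:", shifted(note, -12 * octaves), "Hz")
# 1 octave(s) down: 220.0 Hz
# 2 octave(s) down: 110.0 Hz

# A microtonal detuning of a tenth of a semitone, of the kind that produces beating
detuned = shifted(note, 0.1)
```

A shifted copy that is a tenth of a semitone away from the original sits about 2.5 Hz above it, close enough to beat slowly when both sound together.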

What is it in particular which attracts you to certain sounds? Do you approach instrumentalists because you want to write a piece that uses saxophone for example, or is it more the other way round with people coming to you, and you finding projects which are interesting?

I guess part of it starts with the musician. When I find people that I’m interested to work with, I tend to make pieces with them because I know they’re going to sort of work out. For instance I’ve made six pieces with Ulrich Krieger from Berlin playing saxophone and didgeridoo and that’s just an amazing idea to make that many pieces for one musician. I did make probably that many pieces at least, for David Gibson the cellist, from the mid-70s until the mid-80s. The cello is just an amazing instrument, and he is an incredibly good player for me. He knew very much what to do, what to produce and so that’s part of the thing that’s just working with different musicians. I mean I’ve now pretty much exhausted most of the range of instruments so what else to do?

When you are working with a player how do you decide which sounds to use, which pitches for example, but more importantly which sorts of timbral quality do you look for in the sound of an instrument?

I tend to talk to them about what notes are particularly resonant on the instrument and on some instruments, like cello, that really varies from cello to cello. And then pick some notes which use that resonance and then probably a small cluster around it. I tend to work quite a lot with three half-tone intervals particularly in recent years. So that’s sort of basically it, just talking to them and finding out what’s going to make the instrument sound good.

So you want the instrument to have a good resonant quality to it essentially?

Yes. Because the resonance has the richest overtones.

When you have actually recorded your samples, what’s your next stage? Do you use everything that you have recorded or do you edit it at all? How do you take it from that point to make a piece?

OK, I guess I’ll talk through the stages then. I go to a recording studio, which can also be quite a small studio. For instance the last set of pieces I did with Ulrich Krieger we recorded in his apartment and he lives in the top of a building and it has a very broken paneled ceiling that’s peaked in various places and so that it turned out that it was a very good sound space and the sound we got using just a single mono condenser microphone was really very nice for those pieces. So part of it then is the recording studio, the recording medium, and who is engineering, if anyone is. I just made a recording which I’m actually looking at, listening to right now, with a church organ, a baroque organ in a town outside of Krems, Austria, and the sound was very good. I was using one large diaphragm AKG microphone to record it and going directly onto the hard drive from Pro Tools so it’s a very direct recording. So after I’ve recorded the material, I start to edit. I would delete samples that did not work. I would take out some clicks and pops if they occur or if there is a splutter at the beginning of the tone I might just fade it in. There’s normally quite a bit of difference in level between the sound samples, so I might try to even out the levels by raising some and lowering others and then normally after all that editing is done I bounce to disk. I rerecord the material with the edits intact. Then from that material I begin to create the regions (the sort of tones that I use) and then I make a list of those and then I’ll start manufacturing some pitch shifts in the computer itself.

How long are the sample tones that you tend to take? Is there a duration you aim for, or is it variable?

Most wind instruments can hold a note for about 15 seconds and strings if they’re playing fairly slowly between 10 and say 12 seconds or something like that, before a bow change.

So really it’s as long as you can with a sustained sound?

But what I tend to do with strings is to record say a minute or so and leave the bow changes in, and if they are fairly smooth then that works out quite well. So if I were recording a wind instrument I might record say ten examples of one note. I would edit out the samples that were with errors, and trim others. In addition, I take out the breathing spaces between samples, leaving the trail-offs and the attacks. After the editing, I would have one to three minutes of each note, and that becomes a named ‘region’.

Once I’ve got the regions set up I just start to think about the whole structure of the piece. By that time I’ve listened to the material and I have an idea of what might really sound good and what maybe doesn’t sound so good. There’s a piece for hurdy gurdy, with Jim O’Rourke playing, and the hurdy gurdy had been somewhat damaged so there was a clunk every turn of the wheel and I recorded a whole bunch of material and I thought I wouldn’t be able to use it because it was so marred by these clunks. Then I found one note which really sounded good and I did some octave changes and a lot of pitch shifts and so I constructed the piece from that one note for the first nine minutes and then I just threw everything else in at the end for six minutes! Considering I didn’t think I was going to be able to finish the piece with that material, it’s a piece I admire very much and I tend to play it a lot, particularly at the beginning of a concert. It’s a very good first piece to play.

How long does it take you to make a piece, start to finish, once you’ve recorded your sounds?

It can be a couple of days. One phase is recording the sound material/samples. That’s usually a session of two to three hours – but then it takes maybe a day normally to do the editing to get the regions all in shape and then another day or two to put it together. It depends what else is happening (in life) so it’s difficult to put an exact time. The guitar piece went slower just because I had this technical difficulty and I kept stopping and working on it and coming back to it and trying to figure out what I was doing wrong and then I realized I was only nine days from the performance of it and it was really going slow! Then I really speeded up and solved the problem and put the piece together. There’s no set answer as to how long it takes.

So after you have edited the material, does the structure emerge at that point in the process of writing or do you ever plan it in advance?

It’s mostly a matter of listening to the material and deciding what’s really interesting and then trying to figure a structure around that. I just finished a piece that was for me extremely long, it was 59 minutes and it really did make a huge difference in the way that the structure of the piece went internally. I normally make pieces which are about 20 minutes long. I like very much that time length as it allows me to play several pieces in a concert. If I play for an hour and a half I can play four pieces for instance, which is good, then it gives quite a different sound quality for those four pieces. And so a 59 minute piece, well that’s pretty much a concert!

How did the structure of that piece differ from your other pieces? Perhaps as a preface to that question could you say how the structures tend to work in your 20–25 minute pieces? I’m always aware of the comment Feldman made about his generation being hung up on the 20–25 minute piece and it became their clock in the way that they managed to always articulate that length of time, and I’m interested in the fact that most of your pieces are around that sort of duration.

And he also says something about longer pieces – that it really changes your whole sense of form and there was a very interesting statement that he made and I’ve forgotten the terms that he used! I really like the 20-minute piece so I’m quite satisfied with that and a lot of it has to do with simply being able to play several pieces in a concert and I’m mostly doing solo concerts. I very seldom play a piece within a concert of four different composers.

How do you tend to articulate a 20-minute span?

I try to make it different each time! Sometimes it isn’t much different except for the timbral qualities of the particular instrument.

I think there’s a very clear difference in pacing often and I wondered how you went about doing it? Is it an intuitive thing or is it more planned?

Well hmm…I guess I could say something about this 59 minute piece. There are several interesting things about it. I recorded two guitars in stereo and they remained in stereo so that one guitar is always on the left, the other guitar is always on the right and the chord is three half tone intervals so that the left guitar gets the lowest note and the middle note and the right guitar gets the middle note and the highest note. And we recorded a number of octaves of each note as well. I started the piece with the central note which was an F and it goes for say ten minutes before any other note enters and without too many pitch bends so that it’s sort of gently rolling and phasing but there’s not a lot of variation in that first ten minutes and then the first lower note comes in and it’s a half tone interval that beats really very fast with the F and so then I start making a lot of pitch bends right after that so that the beat isn’t a constant beating and it becomes very much modified by the other microtones that are happening. So it becomes a very varied tempo (beating pattern) instead of a very singular one. At 20 minutes it becomes considerably thinner in sound and fewer tracks are utilized and then it becomes only the outside notes so that each channel has a different note so that the left channel is playing E and the other is playing an F#. So we even hear these two different notes staying completely separated in the stereo, then it gradually begins to mix in the other, central note and it manages to continue changing considerably every few minutes. When you’re playing this thing in Pro Tools you can stop it and then advance say five minutes and start it again very quickly which you can’t do with a CD because I didn’t put any programme numbers in which would make that possible.
Probably if it comes out as a recording I would do that – I would put in programme numbers so that you can advance through the piece, and if you do that and play, say, every five minutes the difference in sound is incredible. I was amazed how much it changes because when you are listening to it as a continuum it sounds like drones and you may think that, well, it must sound different from other periods of time, but the actual difference in the sound quality is quite amazing.

That’s something I’ve always liked about your music. The moment you are in seems fairly stable but as you say when you jump a minute ahead or a minute behind it’s enormously different but the changes are so slow, subtle and gradual.

You also just mentioned using Pro Tools and I wondered how using the computer affects how you write? Obviously it’s your tool for making your piece – I think I read previously you used tape technology and I wondered whether that’s actually changed the way you work in any way or even just made you more prolific perhaps?

I think it changes a lot. It particularly makes it much faster to work, except for this guitar piece where I had some technical difficulties because of the stereo. I’d never actually made a piece using separated stereo recordings of samples, it was always mono, and I was having some trouble mixing this stuff down because it kept going to mono and it turns out that some of the pans were slightly off and they were converting the stereo files to mono. But then once I solved the problem, it was a matter of very few days to actually finish the piece.

I began working, in the beginning, in the 1960s, with a stereo tape recorder. In fact, the first piece was recorded with a mono full track machine. And then I managed to get a quite good Revox stereo machine and so I was dubbing stuff back and forth to build up tapes from different tape recorders. I began to work not too long after that on multi-track recorders. Actually I had access to a 16-track Ampex in Boston in the early 70s and made a number of pieces. I would simply go to Boston and spend the weekend, just go in to the studio on a Friday night when nobody was using it and stay up for about two days and work and sleep on the floor if I had to sleep. So that was amazing to be able to really use a multi-track machine which sounded very good. Then I went through a succession of four-track and eight-track Teac machines. Some time in the mid-80s or so I pretty much stopped making music for several years and started again in the very early 90s, so there was a period of about five years which was more or less blank in terms of compositions. And then I was working more with computers but having someone else actually do the technology so I would make a score, I would record the material, edit the material and make a score, then they would sort of build the piece from that score. But in ’98 or so I began actually working in Pro Tools myself and then it was just very fast work. And it was also possible, instead of recording the microtonal intervals, to manufacture them in Pro Tools. And they sound quite good, so in the end the piece sounds good. I mean the digital recording process is amazingly different.

So was there a difference between the pieces pre-98 where you had to give the information about the structure of the piece to somebody else, to how you work now? Were they slightly more planned perhaps before that point?

Well I obviously had to finish the score before building the piece. In the original tape recording pieces / multi-track pieces – I actually made a score for the multi-track set before I began working with tape and then when I did work with the tape I simply dubbed the material from mono onto one track of the multi-track and just built the whole tape. So I never listened to the stuff and changed the material as I was building the recording. I made the score and I just manufactured the tape, then I listened to it as the mix. So one thing about Pro Tools is it’s very easy to do a little bit and then listen to some and then do some more and then listen to some. I’m not sure how much it really affects how I work. I think it has more to do with just the ease of working in Pro Tools and putting things in and also getting so many more tracks because the material is so much thicker than it was when I was working with multi-track. Building the piece in Pro Tools is simultaneous with making the score – the score appears before your eyes as you work!

How many tracks do you usually use?

Usually it’s 32 now, and in this recent piece it was 16 stereo tracks.

Do you think the reason also you are perhaps working more intuitively is because you have a clearer understanding of what you are trying to do in your pieces now than you might have done 20 years ago?

Yes. But I think basically I’m working pretty much in the same fashion that I worked even at the very beginning before I began working in multi-track. I mean, basically there’s a piece for tenor saxophone from like ’69 or ’70 made by dubbing tapes back and forth and technically it sounds remarkably the same as now, I think.

Obviously you just mentioned about producing sketches, notation, plans for your pieces – do you still do that at all, or is Pro Tools the only score you need?

The Pro Tools score, the actual Pro Tools edit window, is the score which occurs as I’m making it so the same kind of decisions I would have made on paper are now just made in front of my eyes on the screen.

So there’s never a need for any sort of other realization of the piece in that sense?

In terms of the score, no.

That brings us on to the performance aspect of your work and obviously you have quite a busy programme of concert tours going around the world playing your pieces. I wondered, given that it’s recorded music, why it’s important to you to play your music live?

You can’t really hear my music the way it should be except in a concert and you can’t really do that concert without me! I mean, I even make some decisions during the concert set-up which seem strange, but there’s usually a reason for them, but really what you hire in having me come to do a concert is having the right sound of the music (or as close as I can get, under the conditions). You can buy the CDs – the same CDs I play from sometimes – but no one has the kind of system at home usually, except maybe for me, with which you can get the kind of sound that the music is all about. So when you buy a CD and play it on your home sound system it isn’t at all the music that I intend.

Do you see that as a problem?

Well sure it’s a problem. It’s the one reason why I never wanted to do recordings in the first place. In the 70s for instance it was because LPs never really sounded that good for my music. But it’s a problem that when I didn’t do records I simply wasn’t known. It’s always nice to have a couple of CDs out!

When a composer is heavily involved in the performance of their work it always creates a problem for the long-term presentation of their music. Have you thought about building up a performance practice around what you do – is that something which concerns you at all? How do you ensure future performances of your work are correct?

I don’t! Even if you did something like a DVD. Actually there is an interesting DVD of a performance situation, and it is the only documentation that ever worked, I think. It was done by Jim Staley at Roulette in New York. He is doing a whole series of studio things that were eventually published on DVD and you can actually get many of them from him. There are pieces by Pauline Oliveros and I think Lucier and many different people from the improvising world as well. And they were all half-hour shows and we did it in a studio. We were actually projecting my video on a large screen. There were three musicians playing guitars with e-bows and I’m sitting at a desk with my computer also playing a part, and the music is in the room, but then they could also cut to the film directly (so not from the studio camera but directly from the video source) and they could light the musicians well enough so you could see the musicians and see what was happening, and it was an amazing idea. You could see it and hear it. But I don’t see how that would help gain the right kind of sound for me even. I mean, how do you specify that somebody has to listen to the sound quality, making it loud enough but making sure the speakers are working and all of that stuff? But I don’t know, I don’t see any way to do it.

Talking a bit more about the whole setup of your performances in a live environment, is there an ideal space for your music to work in? What is it that works well, and have you been in rooms that don’t work particularly well?

I mean I’ve worked in so many different kinds of situations – with either rooms that weren’t very appropriate or sound systems that weren’t very good, but sometimes the sound system can be mediocre and the room can be great and it sounds really good, and sometimes it sounds horrible. I tried to play in Budapest last week, this new guitar piece, and it was in a very small room with a lot of soundproofing and there were big speakers, and you really heard the speakers instead of the acoustic/architectural aspects of the space. You didn’t hear the room sound because there wasn’t any room sound. It was completely dead. But the ceiling was very low and it had metal panels with fans and everything rattled – you couldn’t hear the piece because the whole room was rattling. I simply had to fade that piece out and fade in another piece and play a completely different programme. I did a sound check and I thought maybe it was going to be OK, but then the actual performance simply wasn’t OK.

What sort of normal setup do you go for with speakers – how many, placement, that sort of thing?

Well I always ask for speakers in the four corners of the space, and big speakers. It’s frequently a problem that there isn’t enough power available because the sound is so continuous that there’s no chance for the amps to take a break. Where you have something with a heavy beat there’s space in between that beat for the most part and I’ve had amplifiers that just got too hot and just cut out for several seconds and would come back in and cut out again later. And I did a concert recently where the speakers were quite good, it was in a church, it was quite a big church, and usually the church is really good because the reverb of the space is so good that the music sounds great even if the sound level isn’t quite so loud. But the speakers simply distorted when I got the right level, so strange.

What is the right level for most of your music?

I’m setting things peaking at between about 110 and 115 dB and I normally set up the sound system with a sound level meter so I’m listening but I’m also pushing it up to that level and then seeing how it goes. In some rooms, the room is simply too tight. I think in Budapest for instance because you really heard the speakers and they were right in front of you that it was simply too loud, so I probably was maybe 5 dB less loud. But it still was quite loud generally!
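Because the decibel scale is logarithmic, a "5 dB less loud" setting is a bigger physical change than it may sound. A minimal sketch of the conversion, assuming the meter readings are sound pressure levels: a level change of ΔdB corresponds to an intensity (power) ratio of 10^(ΔdB/10) and a pressure (amplitude) ratio of 10^(ΔdB/20). Perceived loudness follows a different, frequency-dependent curve, which this sketch does not model; the function names are hypothetical.

```python
def power_ratio(db_change: float) -> float:
    """Ratio of acoustic intensity (power) for a level change in decibels."""
    return 10 ** (db_change / 10)

def pressure_ratio(db_change: float) -> float:
    """Ratio of sound pressure (amplitude) for a level change in decibels."""
    return 10 ** (db_change / 20)

# Turning down by 5 dB, as in the Budapest example
print(round(power_ratio(-5), 3))     # 0.316
print(round(pressure_ratio(-5), 3))  # 0.562
```

So dropping 5 dB leaves roughly a third of the acoustic power, and the 110 to 115 dB range Niblock mentions likewise spans about a threefold difference in intensity.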

And that’s obviously so you can hear the overtones and the beating and difference tones?

You really hear a completely different overtone pattern at the louder levels. There’s one piece in particular, from 1974, for cello, and it’s only eight channels and not all eight channels are on all the time either. When it’s loud enough you hear the overtones and you lose the cello completely and when you turn it down you just hear cello. It’s just the best example of what happens with different loudness levels.

Often in the performances, certainly the ones I’ve seen, you also use a live musician playing the same instrument as on the recording and I just wondered what you felt was the purpose of that in a performance situation?

It probably has more to do with simply satisfying the audience! Playing back a recording, I think that it’s harder to convince the audience if they just hear a tape that it’s a valid performance somehow. Generally what happens if there aren’t musicians is that I play a computer part myself, so I sit at the table on stage and I play an additional eight channels and I change the pitch. So I’m sitting there and interacting with the music or appearing to interact, but if I turn my part completely down (and leave the recording level up) and continue to sit there and play or do email or something it doesn’t make any difference really in the sound because it’s whatever is there in the recording that counts.

Is it important in that sense to be able to hear what the live player is doing? Are they affecting the sounds in any way or is it just literally for the visual aspect?

In general they do affect what’s happening and they affect it especially in areas close to where they are in the space, and especially if they’re not (amplified) in the mix itself. So it does work. I mean there is a reason for their being there and they do make a difference and that’s very interesting so I shouldn’t pooh-pooh it too much! And some players are very sensitive to what they are doing and what the effect is in the space. I have had the pleasure of working with many musicians over a long period of time (from 1975, for instance), and that is a special pleasure for me.

What do you ask them to play?

Generally I work with people who know the music or the piece that they are playing so that tends to work more intuitively. But if I work with people who don’t, then I ask them to play the tones that they hear in the recording and be slightly off so they can themselves hear the beats and tell what’s happening with the overtones.

Then again you wouldn’t ever specify you know ‘1’36 – start playing’?

In general no…

Is that because you want more of a free relationship with the recorded version?

I don’t know that I have a good answer for that!

Do you prefer an intuitive approach from performers in that case? You don’t want them to learn the piece?

Anybody who was interested in the music wouldn’t play it the same way twice anyway so I don’t think that’s a problem.

I suppose I’m just speaking from my own experience when we did that concert in Huddersfield last year. It was the first time that I’d played the piece and I felt that I had to listen through it quite a few times, trying to work out where the pitch changes happened and trying to have that as a rough guide to the shape of the piece, I suppose. But with that in mind, are there incorrect ways of playing the piece? If it starts with a single tone, presumably you want that single tone or very near tunings of it to be the only things that you can hear. Is that fair enough?

Yes that sounds good, I mean certainly there are many bad ways to play my music and I’ve had some horrible experiences where there wasn’t enough rehearsal or where people simply didn’t get it so they didn’t understand what they had to do and they’d start improvising whatever they want to on top of the piece. They consider it a sound piece they should improvise over. This happened very recently with someone in the US and we arrived late at the gig and there simply wasn’t time to rehearse and she played and I was amazed that she had a complete misunderstanding of what she should do. She did exactly that, she was just improvising over the piece.

In general you would prefer them to follow the structure of the piece and actually try to match the pitches they are selecting in particular?

Yes, and especially not to either play other notes or to play too fast (but to play the tones with a long duration). The idea is to stay with that sort of steady long tone process stuff. I always find it hard to explain that to musicians. I think, not being a musician myself, that the musicians who are really good just hear the music and they really have a sense of what they should do and they do a great job.

Let me say one more thing about the last section, which is sort of implied, but I never really made the statement. I think that this (my) music sounds incredibly different in every different performance situation and so it’s not canned – it’s recorded, yes, but it’s not canned, because every aspect of the performance situation changes it: the acoustics of the space, how big it is, how reverberant it is, plus the sound system, even where you (the audience) sit in the space. I mean there are many places in the space where it sounds so different than other places in the space that it’s just amazing and if everybody wandered around (during the concert), which audiences generally don’t like to do, especially if there are films on, it would be a much richer experience in terms of sensing the differences that occur. So it’s very much architectural / acoustic music that’s occurring and it changes really drastically with every performance space / situation.

I suppose that brings us on to the issue of writing just for live performers, which you’ve done certainly with the orchestra piece Disseminate (1998), and I wondered how your approach changes when you are working with musicians purely in a live situation?

In some sense the score of course exists in the same manner as the old multi-track tape scores, so that instead of my realising it by dubbing a tape together, the orchestra is realising it by playing it. It's a problem of working with musicians who have a sense of what my music is. One aspect of playing this music in Ostrava was that the orchestra is used to playing Dvorak and, I think, they really didn't much like the contemporary stuff, not even really where it was sounding more like classical music. They didn't know how to deal with dynamics and stuff and they tended to want to make more swells. If you listen to the recordings of Three Orchids (2003), a piece for three orchestras, the difference the musicians make is very obvious. There are four recordings of performances: the original premiere in Ostrava; another in Ostrava a year later; one from the MaerzMusik Festival in Berlin; and one from Merkin Hall in New York. The first three were played by the Janacek Philharmonic of Ostrava. The last was with a smaller number of New York professional musicians who play all kinds of music, and they were amazing because they all know exactly what should happen in my music and they really played super, with an incredibly small amount of rehearsal, and we actually got a seven-channel surround sound recording from that concert. I hope that it will be on a DVD at some point as surround sound. The MaerzMusik / Berlin recording was with three orchestras and with three conductors, which Petr Kotik, one of the conductors and the producer, was really objecting to. All other performances were with Petr conducting all three orchestras, alone. There are all these swells and dynamic changes, so it's interesting to listen to the two versions with such a different result.

So what do you actually give the players in terms of notation?

There is a score, in standard notation, for all the orchestra pieces and there are now four of them. The microtones are indicated next to the note figure, with arrows pointing up or down, showing the range of sharpness or flatness.

One of the problems that I've always had is that Petr Kotik (the conductor and producer of the orchestra pieces) doesn't rehearse enough. He always says that the musicians will get too tired and they can't go on to the next rehearsal etc. if they play continuously for a long time. But I think that he doesn't rehearse enough for them to really get a sense of what it should sound like, and he should go through the whole piece at least once, because it isn't about reading the notes; it's about sensing how one deals with it within the orchestra. The last recording on the CD I gave you is the piece written for two orchestras, and what Kotik did that year (2005), the third time that the festival occurred, was to bring a bunch of musicians from elsewhere and to essentially form another orchestra. The core musicians were the ones he plays with in New York and the other musicians came from various other countries and they're all very together – they're really hip and so it works very much better than using the Janacek Philharmonic. The concertmaster of that group, the Ostrava Banda, had played all three of my orchestra pieces within that year! So he was amazingly practised in my music.

In terms of the notation you give them, do they have a stopwatch or do you give them particular frequencies?

Kotik uses a technique that I think came from Cage, where he lifts his arms over his head and then gradually brings one or the other arm down to his thigh, over one minute. He has a stopwatch but the orchestra only watches him and he tends to vary the duration a little bit. One of the instructions they have is not to change the note immediately at the end of the minute but to feather it so that different people start to make note changes at different times, so that there is a continuity, rather than a sudden change of note every minute.

So the score is actually fairly fixed, so that is very different to the situation with your concerts where you perform and have a live musician playing with the recorded sound. And in the orchestra pieces they are given a score essentially?

They are given a score but in a way it's the same – if they don't understand the context they won't play it well. And that was always the problem. It was great to do those pieces, but when they were played in Ostrava they were generally not played perfectly. And in the case of Three Orchids, when it was played in New York it was played by people who know how to play it and even without much rehearsal it sounded really great.

Does the music still work at what I am presuming is a lower volume level, and with mixed timbres as well?

Well yes and no. It’s more of a spectacle to have a big orchestra! No wonder those guys (those orchestral composers) always wanted to have a big orchestra! It’s much more subtle – in fact probably the recording (if it’s done well) sounds better than the live thing (because it can be played louder). And of course where it sounds best is in the front and middle of the orchestra, where the microphones are normally placed. The audience is too far away and cannot hear the music as well as the microphones.

Have you tried repeating your normal concert situation of you playing your CD recorded version with a live instrument, doing that with the orchestra, so you have a recorded sound with the orchestra to get that level of sound, the volume level you require?

Well if you made the recorded sound at all louder than the orchestra you wouldn't hear the orchestra anyway I think. So it seems like it should be the purity of the orchestra or not. There are a number of instances where quite a few musicians have played with the recorded sound and especially if they are amplified that's always interesting. And there are some other pieces for smaller ensembles made in the 80s where the musicians basically were tuned by listening with headphones to a four-channel tape which has tuning sine waves on it. They were only playing four notes, but on the other hand they would be separated on the stage. The few who were playing the same note, the same tuning, would be separated and they would probably not play the same note anyway since each one would tune somewhat differently to the tuning tones! It only worked when there were at least a dozen musicians, otherwise it wasn't loud enough. There was a piece for quartet and stroke rods (specially tuned, long, aluminium rods, about 0.5 inches in diameter, and many of them, played by rubbing along their length with rosined fingers) which was with Dean Drummond; New Band was the name of the group. There simply weren't enough people to attain an effective volume of sound. The recording didn't sound so bad but the live, acoustic quartet and the stroke rods didn't sound particularly interesting at all.

Is that an area you want to explore, working with live musicians?

Yeah, but I probably wouldn't go back to that same thing. I mean the orchestra pieces are interesting to do but the smaller ensembles are not so interesting to do. I think one of the best pieces of mine is basically done the same way. It's Five or More String Quartets (1991) and it's made with the musicians tuning to a four-channel tape with headphones so that each one hears a different tone – except that there were five different four-channel tapes! That is, five string quartets on 20 tracks of tape, and all made in five continuous, acoustic recordings of 25 minutes. And so in the multi-track recording, all of which was made in a studio with the four musicians playing at the same time but miked separately enough that they're pretty much separated, the piece really sounds great I think. And when you play that recording fairly loud then you really hear all of that microtonal interaction. But it's also a piece that when you don't play it so loud you can still hear a lot of the overtone patterns. It's one piece where you can play it at less volume than I would ever think of as concert volume and it does really sound remarkably good.

Does that open up the possibility of a quiet Phill Niblock piece?

Well I wouldn't say quiet! Not so loud! Do you have a recording of that? It might be interesting to try it at different volumes.

I will do that. I always try and see what my stereo will go up to and what my neighbours will let me play, which are normally not the same thing.

Five or More String Quartets was constructed very much like an orchestra piece, scored in a different way, but meant to be played in a continuous time, acoustically. The structure of that piece became the structure of the first orchestra piece, Disseminate.

Several of the orchestra pieces have now been played and recorded by small ensembles reading the scores and using multitrack recording to build up an orchestra sound, in a fashion very similar to Five or More String Quartets. In a live performance, the recording is played at a volume to match the acoustic sound level of the instruments, which are being played live. Or more likely, the live instruments are being amplified to match the sound level of the recording playback, which gives the volume necessary to produce the overtone richness.

Just to finish with, obviously I’ve not mentioned any of your work as a filmmaker but I know that you started working with film before you worked as a composer. I just wondered how you see the relationship between the way you work as a filmmaker and the way you work as a composer in the first instance?

That's an oft-asked question which I think maybe in recent years I've had a slightly better answer for. Normally I'd just say 'oh, there's no relationship – you figure it out!' But essentially it's a very minimalist approach to both and it's easier to see in the music. The music is so abstract: you take out the melody, rhythm and, you know, harmonic progression, and you're left with that very simple music with a lot of internal life. In the film I'm also stripping out a lot of the typical structure of film. The shots are all quite long so that there's no cutting, no editing time imposed, no montage, and there are relatively few sequences – it mostly goes from one shot to another – a completely different place or different activity etc. so I've really cut much of the normal structure of film out. Obviously there's no narrative but on the other hand there's hardly any of the typical faces of people – I mean documentary filmmakers usually hate it!

Is it in a sense you are just framing events whether they are sounds or images, and just presenting them in a quite bare way I suppose?

Yeah, this bareness, simplicity, starkness, is one thing and I never use really any effects. I only move the camera if something is moving in the frame I’m following. I mean zooms are really, urgh, awful! I used to teach film and the first thing I would do was to tell them that they couldn’t make zooms! I couldn’t stand to watch them!

Thinking more recently do you make the films with knowledge that they are going to be used as part of your music performance? Does that affect how you make the films in any way or are they completely independent?

I don’t make those films any more. That’s a series of films from 1973 until 1991, which is already getting close to 20 years ago. I simply stopped making them. I had a lot of material and I was spending a lot of time and having to raise a lot of money. It was also the time when the funding completely dried up in the United States. I was getting quite good funding from the late 60s until 1990 and after that – phttt – nothing! And so it was very much harder to go out and shoot the films. I did start shooting video which was much cheaper but the travel costs weren’t that much cheaper.

Have you ever used any other visual imagery with your music in performance other than the films of people working?

There’s a series of pieces using very high contrast black and white photographs which I did from roughly 1986 until ‘92 or ‘93 and they are either used as slides and they dissolve with slide projectors or in recent years they are in the computer and they simply dissolve. I do quite a few installations with that material. There’s one running now in a suburb of Chicago and sometimes I’ve done those with the music but for the most part I was never interested in using music with that material. I did one very long installation with three of those images in a very big room – they were six feet wide images – and I used the string quartets which I thought worked as well as anything with those pieces. So it was running as an installation all day long, day after day. But the staff kept turning down the volume of the music.

Just one more question – what’s next? What have you got coming up and what do you feel you still want to explore in your music in the next few years?

In about four days' time I finished this 59-minute piece for two guitars. While it was being rehearsed, I did a lot of work on the score for a new piece for Ostrava which is for solo baritone voice, chorus and orchestra. The day after the premiere of the guitar piece, I recorded material for an organ piece. I didn't make any pieces in 2006 because I made too many pieces in 2003, '04 and '05: there were 13 new pieces and I just thought it was time I took a break. I did make one sound collage piece but I didn't make any music. So this guitar piece is actually the first piece since 2005 that I have finished.

Do you think that break has caused you to have a late-Feldman phase and all the pieces are going to be very long now?

Naagh! I can’t imagine the organ piece is going to be more than 20 minutes!